Do You Need 10-Bit 4:2:2 and S-Log? (Chroma Subsampling and Bit Depth Explained)

Guide: What is 10-bit 4:2:2 video and S-Log and why do you need it?

I’ll say this:

A lot of people seem to want 10-bit 4:2:2 in their camera, but many don’t really understand what it is or why you would need it. Here’s what to expect…

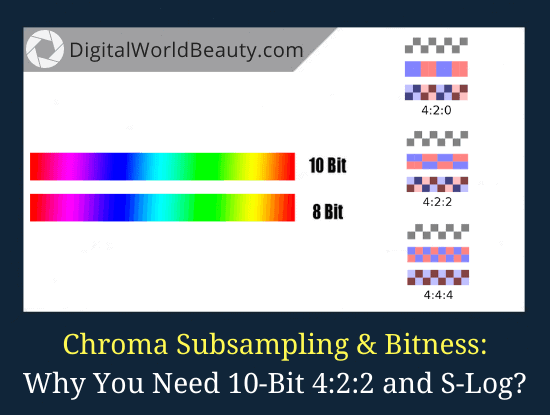

- chroma subsampling – 4:4:4 vs 4:2:2 vs 4:2:0

- the difference between 10 bit 4:2:2 and 8 bit 4:2:0 and why you shouldn’t bother

- S-Log explained

Now, this is for those who are already well versed in technology and will understand the topic at hand. If you’re a newbie, you will get even more confused.

Without further ado, let’s get started.

Don’t Get Caught Up with Numbers and Specs

First things first:

Remember that while technical specifications are important, they’re not everything. When Philip Bloom tweeted about a video he shot several years ago in 8-bit 4:2:0 AVCHD, he said exactly that.

Don’t get caught up with numbers and specs!

If you’re curious, here’s a video that he was referring to in his tweet, “Portrait of a boxer”:

I thought I’d remind you of this before moving on to 4:2:2, 4:2:0 and so forth.

Chroma Subsampling – 4:4:4 vs 4:2:2 vs 4:2:0

So…

4:2:2 is a chroma subsampling scheme used in many video encoding formats.

There is also 4:4:4, which means no chroma subsampling at all: luma and chroma are stored for every individual pixel. (The notation says nothing about whether the video is otherwise compressed.)

Compared with 4:2:0, 4:2:2 carries twice as much color information. More precisely, it throws away less: 4:2:2 keeps half of the chroma samples and 4:2:0 keeps a quarter, while 4:4:4 keeps them all.
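To make the ratios concrete, here is a minimal sketch that turns the J:a:b notation into the fraction of chroma samples kept relative to 4:4:4. The function name `chroma_fraction` is made up for this illustration, and it only covers the three common schemes discussed here.

```python
def chroma_fraction(j, a, b):
    """Fraction of chroma samples kept, relative to 4:4:4, for a J:a:b scheme.

    The notation describes a block J pixels wide and 2 rows high:
    a = chroma samples in the first row, b = chroma samples in the second row.
    """
    return (a + b) / (2 * j)


schemes = {"4:4:4": (4, 4, 4), "4:2:2": (4, 2, 2), "4:2:0": (4, 2, 0)}

for name, (j, a, b) in schemes.items():
    print(f"{name}: keeps {chroma_fraction(j, a, b):.0%} of the chroma samples")
```

So 4:2:2 keeps twice as many chroma samples as 4:2:0, while luma is stored for every pixel in all three schemes.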

Many people will confirm that the difference isn’t really noticeable. Even when you shoot against a green screen (for which we highly recommend 4:2:2), the difference is subtle.

The only thing an experienced eye might notice is a more saturated red. Also, because 4:2:2 codecs (for example, ProRes 422) usually record at a higher bitrate, you see much cleaner edges and less color noise on moving objects…

(But you can only notice this on a paused frame. While the video is playing, you will never notice any of it. And it comes from the bitrate, not from 4:2:2 subsampling.)

By the way, this is the whole essence of highly efficient encoding.

All these motion prediction and motion vector schemes boil down to the fact that you can compress video heavily in ways the eye doesn’t notice. This is what makes video different from a photo. In a photo, you will readily see the difference between JPEG at 95% and at 60% quality, but in video (in motion) the difference won’t be visible.

Dave Dugdale had an interesting video, where you’ll learn that the difference is noticeable only at extreme values of color correction, when the image is already completely destroyed, for example, when you correct it by 5 stops of exposure.

But that’s not the most interesting thing.

What’s interesting is that if you shoot 4K 4:2:0 and then downscale it to 1080p, you get at least the equivalent of 4:2:2. (In terms of chroma resolution it is effectively 4:4:4, because the chroma planes of a 3840×2160 4:2:0 frame are already 1920×1080.)

This works because chroma subsampling discards every second chroma sample (luma is kept for every pixel), so neighboring pixels end up sharing color data. By downscaling 4K video by a factor of 2, each output pixel gets its own color sample again.
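The arithmetic behind this can be sketched in a few lines. The helper `plane_sizes` below is a hypothetical name for this example, and it assumes the usual layout where 4:2:2 halves chroma horizontally and 4:2:0 halves it both horizontally and vertically.

```python
# Horizontal and vertical chroma divisors for each scheme.
CHROMA_DIVISORS = {"4:4:4": (1, 1), "4:2:2": (2, 1), "4:2:0": (2, 2)}


def plane_sizes(width, height, scheme):
    """Return ((luma_w, luma_h), (chroma_w, chroma_h)) for one frame."""
    h_div, v_div = CHROMA_DIVISORS[scheme]
    return (width, height), (width // h_div, height // v_div)


uhd_luma, uhd_chroma = plane_sizes(3840, 2160, "4:2:0")
hd_luma, hd_chroma = plane_sizes(1920, 1080, "4:2:2")

print("4K 4:2:0 chroma plane:   ", uhd_chroma)
print("1080p luma plane:        ", hd_luma)
print("1080p 4:2:2 chroma plane:", hd_chroma)
```

The chroma plane of the 4K 4:2:0 frame has the same dimensions as the 1080p luma plane, which is more chroma detail than a native 1080p 4:2:2 recording carries. How much of that survives in practice depends on how the editing software performs the downscale.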

Of course, it all depends on the signal processing methods in the editing software, but in one of his videos, Dave demonstrated that 4K 4:2:0 downscaled to Full HD looks better than footage originally recorded in Full HD 4:2:2.

Pretty awesome, eh?

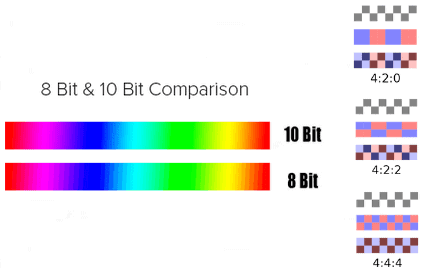

What Is Bit Depth and How Does It Work?

This is the part where it gets tricky.

Bit depth (“bitness”) determines how many gradations of brightness each colour channel of a pixel can have. DIYPhotography.net explains it this way:

“The bit depth is noted in binary digits (bits), and relates to how many different brightness levels are available in each of the three red, green and blue colour channels.”

8-bit gives only 256 values per channel, while 10-bit gives 1024.
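These numbers follow directly from powers of two. A small sketch (the function names are chosen just for this example):

```python
def levels_per_channel(bits):
    """Number of distinct code values one colour channel can hold."""
    return 2 ** bits


def total_colours(bits):
    """Number of distinct R, G, B combinations at this bit depth."""
    return levels_per_channel(bits) ** 3


for bits in (8, 10):
    print(f"{bits}-bit: {levels_per_channel(bits)} levels per channel, "
          f"{total_colours(bits):,} colours in total")
```

Going from 8 to 10 bits gives four times as many steps in each channel, which is what matters for smooth gradients.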

But you only need the extra bit depth if the video will undergo heavy colour correction, for example, when you shoot in S-Log or any other profile with a logarithmic gamma curve. The problem shows up in uniform areas of a single colour: after colour grading, smooth gradients break into visible steps (known as banding).

If you shoot in a standard or neutral profile, your video will not show these defects even after colour correction. But if you shoot in log, 10-bit is a vital necessity, and S-Log at 8-bit is more of a minus than a plus, even though marketing has taught us the opposite.
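Here is a toy model of why a strong grade exposes banding. It is only a sketch under simplifying assumptions: a smooth gradient (say, a sky) is assumed to occupy a narrow slice of the recorded signal range, as it would in flat log footage, and the "grade" is a plain linear contrast stretch rather than a real S-Log curve or LUT. The range limits `lo` and `hi` are arbitrary illustration values.

```python
def distinct_levels_after_grade(bits, lo=0.40, hi=0.50, samples=4096):
    """Count the distinct 8-bit display levels left in a gradient after grading.

    The gradient spans the signal range [lo, hi], is quantized by the camera
    to `bits` bits, then stretched to the full range for an 8-bit display.
    """
    max_code = 2 ** bits - 1
    shown = set()
    for i in range(samples):
        value = lo + (hi - lo) * i / (samples - 1)   # smooth scene gradient
        code = round(value * max_code)               # camera quantization
        recorded = code / max_code
        graded = (recorded - lo) / (hi - lo)         # linear contrast stretch
        graded = min(max(graded, 0.0), 1.0)          # clip to the legal range
        shown.add(round(graded * 255))               # 8-bit display output
    return len(shown)


for bits in (8, 10):
    print(f"{bits}-bit recording: {distinct_levels_after_grade(bits)} "
          f"distinct levels across the graded gradient")
```

In this model the 8-bit recording leaves only a few dozen steps to cover the whole gradient, so each step is a visible jump, while the 10-bit recording leaves roughly four times as many.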

This is presumably why, for example, the Canon EOS-1D X Mark II does not have a Canon Log (C-Log) mode. It shoots in 8-bit, and many users complained about exactly these problems on the earlier Canon EOS-1D C, which could shoot log but was also 8-bit.


And don’t forget another important point:

Most displays are 8-bit, including monitors, TVs, and smartphones. Some use true 8-bit panels, some use 6-bit panels with frame rate control (FRC), but the fact remains: almost all consumer distribution is 8-bit. HD and 4K content delivered by satellite or cable is 8-bit (HDR formats such as HDR10 are the main exception).
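For readers unfamiliar with FRC, the idea is temporal dithering: the panel flips between two neighbouring 6-bit levels over successive frames so that the average lands on the requested 8-bit value. The sketch below is a deliberately simplified model with a fixed 4-frame cycle; real panels use their own spatial and temporal patterns, and `frc_frames` is a name invented for this example.

```python
def frc_frames(value_8bit):
    """Return four 6-bit levels whose average approximates an 8-bit value.

    One 6-bit step equals four 8-bit steps, so the remainder (0..3) says in
    how many of the four frames the next higher 6-bit level is shown.
    """
    base, remainder = divmod(value_8bit, 4)
    # Clamp to 63, the top 6-bit level (the brightest few 8-bit values
    # cannot be reached exactly in this simple model).
    return [min(base + 1, 63) if i < remainder else base for i in range(4)]


frames = frc_frames(130)
print(frames, "-> average in 8-bit units:", sum(frames))
```

Because each 6-bit step is worth four 8-bit steps, the sum of the four frames equals the requested 8-bit value whenever no clamping occurs.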

So if you don’t shoot in log and don’t do very heavy color correction, 8-bit is enough for you. For example, instead of trying to recover an overexposed sky in post, you can use a polarizing or graduated filter on set.

What About S-Log?

Now, let’s talk about S-Log.

In general, S-Log is good, but only at 10-bit. Also, S-Log (like V-Log and C-Log) works best on cameras with high light sensitivity. (So if you were to use the GH5 in log mode, there would be a lot of noise even at rather low ISO values.)

You should also be careful shooting log on DJI camera drones.

In these modes, the shadows just turn into a mess, so on consumer drones with a small sensor, try shooting in the usual neutral profile. As for the Phantom 4 Pro with its 1-inch sensor, I can’t say anything for certain, but judging by the reviews, its shadows aren’t great either.

One more thing:

If you have ever shot with a logarithmic gamma curve, you’ll know that you can’t rely on the readings of the camera’s exposure meter. You have to “overexpose” by one and a half to two stops.
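To put those stops into perspective: each stop doubles the amount of light, so the compensation can be converted into a simple multiplier. A quick sketch (the function name is just for illustration):

```python
def light_multiplier(stops):
    """How many times more light reaches the sensor for a given number of stops."""
    return 2.0 ** stops


for stops in (1.5, 2):
    print(f"+{stops} stops = {light_multiplier(stops):.2f}x the metered exposure")
```

In other words, the suggested “overexposure” means giving the sensor roughly three to four times the light the meter asks for.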

This can backfire, for example, at weddings. If you have many clips shot in different conditions, you can’t just apply one adjustment layer to all of them. You have to grade every clip shot in log individually.

This takes up a huge amount of editing time, so try to use log for short shoots, together with a recorder capable of 10-bit.

Don’t forget that when shooting in log modes, some cameras also switch to a different color table, the color matrix.

As a result, if you didn’t notice this and didn’t select the standard color matrix in the settings, then on the Canon C100, for example, green turns turquoise.

To get the correct colors, you need to apply the correct LUT. But because heavily compressed codecs (H.264) store so little color information, the colors simply fall apart and some areas break up into blocks.

That said, the main piece of advice is to use log thoughtfully and carefully: practice shooting in this mode on simple shoots, learn what your camera is capable of and whether you can do good color correction, and never shoot an important project in log without testing it first.

Thoughts on 10-Bit 4:2:2 and S-Log?

Now…

I’d like to hear from YOU:

- What are your thoughts on 4:4:4 vs 4:2:2 vs 4:2:0 chroma subsampling?

- Do you now understand 10 bit 4:2:2 vs 8 bit 4:2:0 and when to use S-Log?

Let us know your thoughts in the comments below!

==> Material translated from Andrei Karasyov’s blog. (Very knowledgeable guy when it comes to chroma subsampling, bitness and log modes.)

That article is short but helpful. I made all my videos in neutral mode and once made a video (personal use, I am noob) in s-log because I thought I might get better results. But it came out as crap. This article explains why. Thank you.

Glad to share, David!

There’s a lot of great information in this article, really useful for intermediates!

But there’s some misinformation too.

4:2:2 isn’t important when shooting in basic conditions, but when you’re shooting on a green screen for example, it’s vital if you don’t want a horrible line visible around your subject. This line is due to the subsampling of the colors, which creates a mix of colors near the subject, and not the green required.

Also, there’s not a lot of noise in color-graded GH5 footage shot in V-Log L. If you expose it correctly, there shouldn’t be any noise at all. It’s been designed to avoid bringing in too much noise. To understand this, there’s a great article on the subject from the founder of Zcam.

Hi Nathan, just saw your comment. I’m always learning and improving, and appreciate you stopping by and sharing this additional resource to help the website readers further. Thank you!